Everyone is a puzzling mixture of intelligence and irrationality. On the one hand, to be a human being is to be a kind of generalist problem solver: People can learn to speak multiple languages, play complex games like chess, drive cars, use tools, and live in complex societies.

On the other hand, everyone routinely makes mindless, obviously-wrong-in-retrospect mistakes in their everyday lives, such as forgetting to carry an umbrella on a rainy day. So, how can intellectual achievements and cognitive errors co-exist in a single mind?

Getting Mentally Stuck

One cognitive error that has puzzled psychologists is "functional fixedness," which occurs when a potentially useful but unfamiliar way of interacting with an object is overlooked. The finding goes back to the 1940s, when Karl Duncker presented people with a box of tacks, a candle, and a book of matches, and told them to mount a lit candle on the wall. Time and again, despite the simplicity of the problem, people failed to solve it.

The solution? Empty the box of tacks, mount the box to the wall with a tack, and then place the lit candle in the mounted box. In hindsight, it's obvious, but people miss it because they overlook the fact that the box can be used to support the candle. They get stuck thinking of the box as a container for the tacks. They're "fixated" on that "function" for the box.

It isn't that people aren't thinking enough. Participants try a variety of creative (but ultimately incorrect) solutions, such as melting the candle onto the wall or tacking it there directly. And it isn't as if people don't know that a box can be tacked to a wall. They all accept the right answer as a valid solution that they should've figured out. So, what kind of failure is it?

Uncovering the Reasons for Fixedness

Recently, my colleagues and I developed a computational theory of why people succumb to errors like functional fixedness. We proposed that it hinges on two psychological factors: people's tendency to strategically simplify problems and their avoidance of switching between simplifications.

Imagine you're walking somewhere in a city, and you come upon a building in your way. You can think of that building in at least two ways: either as a single, large obstacle that you must navigate around or as an intricate network of rooms and hallways that you might be able to navigate through. Conceptualizing a building as a single massive obstacle is clearly a gross simplification of reality, but it is also generally useful: Most of the time, people don't walk through someone else's living room to get to school or work, so why should they bother even entertaining those possibilities?

Our theory predicts that once you've become accustomed to a particular simplification of reality, you will have trouble switching to a new one even when it would be more useful to do so. Switching is hard because it takes effort to reconfigure how to think about a problem. It's a bit like the effort involved in switching between speaking different languages.

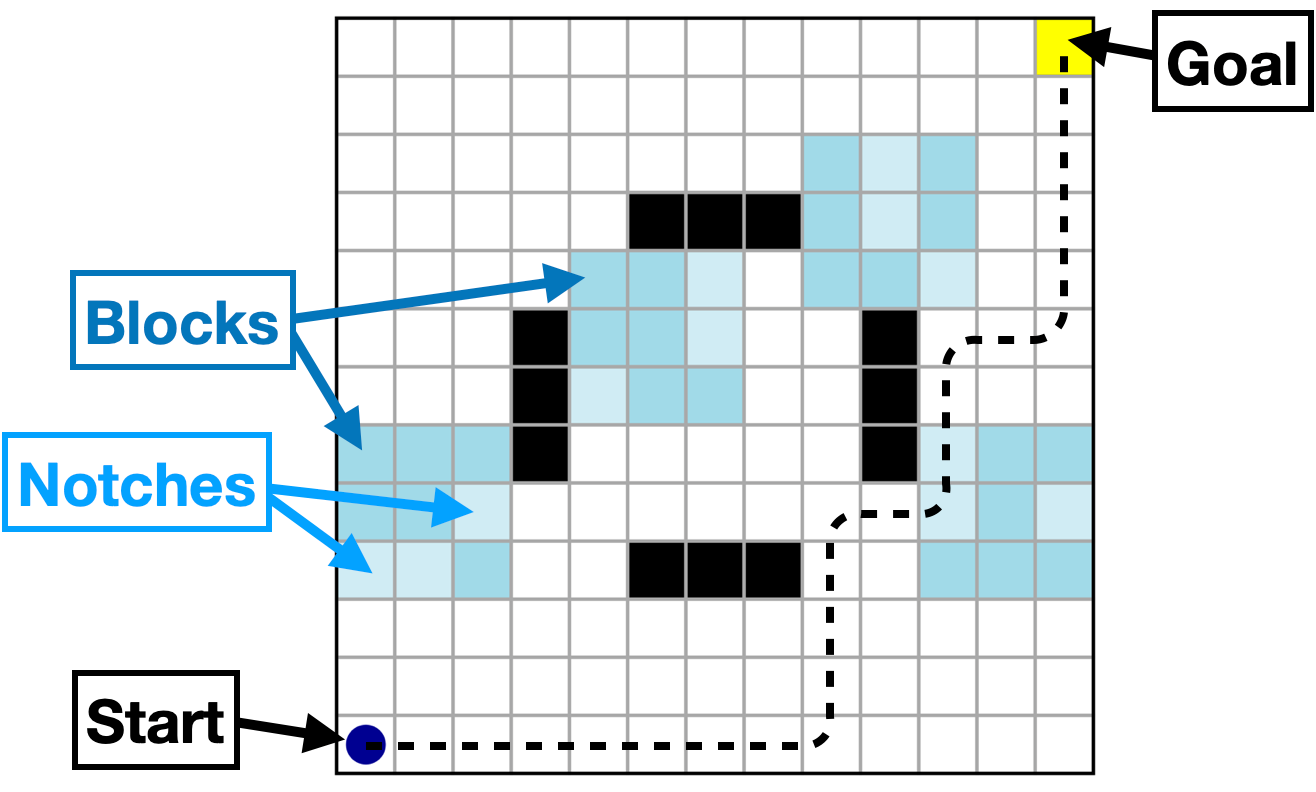

One of the mazes participants completed, in which the notches in the blocks could be used as shortcuts.

To test our theory, we developed a simple task in which people had to find a path through a maze by navigating around blocks that had notches in them. In the beginning, the notches were not particularly useful—they were just part of the blocks. But in the mazes they encountered later, those notches could be used as shortcuts.

We let people freely complete these mazes. But we also built computer programs that navigated the mazes according to principles that came from our theory about people's tendency to simplify and stick to those simplifications. By comparing people's actual movements and reaction times to the way our computer programs moved through the mazes, we could pinpoint which thought processes were most likely to have caused them to take a certain path.

As we predicted, people tended to strategically simplify the mazes by ignoring the notches when they weren't useful. But this also meant they became functionally fixed on the blocks as obstacles and persisted in this simplification even when it would have been useful to switch.
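To make the idea concrete, here is a toy sketch of how a planner that prefers simple representations and pays a price for switching can end up "stuck." It is only an illustration, not the model from our papers: the grid mazes, the function names, and the `detail_cost` and `switch_cost` values are all assumptions invented for this example. The planner can view a maze in a "coarse" way (blocks are solid, notches ignored) or a "detailed" way (notches are passable), and it picks whichever view has the lowest total cost.

```python
from collections import deque

def shortest_path_len(grid, start, goal, use_notches):
    """Breadth-first search on a grid of strings.
    '.' is open floor, '#' is solid block, 'n' is a notch in a block.
    Notch cells count as passable only when use_notches is True."""
    rows, cols = len(grid), len(grid[0])
    passable = {".", "n"} if use_notches else {"."}
    queue = deque([(start, 0)])
    seen = {start}
    while queue:
        (r, c), dist = queue.popleft()
        if (r, c) == goal:
            return dist
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if (0 <= nr < rows and 0 <= nc < cols
                    and (nr, nc) not in seen
                    and grid[nr][nc] in passable):
                seen.add((nr, nc))
                queue.append(((nr, nc), dist + 1))
    return None  # goal unreachable under this view of the maze

def choose_representation(grid, start, goal, previous=None,
                          detail_cost=1.0, switch_cost=0.0):
    """Pick the view of the maze with the lowest total cost:
    path length + a cost for tracking notches + a cost for changing views.
    Both cost parameters are hypothetical knobs for this sketch."""
    totals = {}
    for name, use_notches in (("coarse", False), ("detailed", True)):
        length = shortest_path_len(grid, start, goal, use_notches)
        if length is None:
            continue
        total = length
        if use_notches:
            total += detail_cost   # extra effort of attending to notches
        if previous is not None and name != previous:
            total += switch_cost   # effort of reconfiguring one's thinking
        totals[name] = total
    return min(totals, key=totals.get)

# Early maze: the notch offers no shortcut, so ignoring it is sensible.
EARLY = [
    ".....",
    ".#n#.",
    ".....",
]

# Later maze: the notch is a real shortcut through a long wall.
LATE = [
    ".......",
    "###n##.",
    ".......",
]

first = choose_representation(EARLY, (0, 0), (2, 4))
flexible = choose_representation(LATE, (0, 0), (2, 0), previous=first)
rigid = choose_representation(LATE, (0, 0), (2, 0), previous=first,
                              switch_cost=6.0)
print(first, flexible, rigid)
```

In this toy setup, a planner with no switching cost adopts the detailed view as soon as the notch becomes useful, whereas a planner with a large enough switching cost keeps treating the blocks as solid obstacles and takes the long way around.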

Getting Unstuck?

Thinking about situations in rigid, inadequate ways is certainly not a new problem. Whether it's forgetting that a box can do more than hold tacks or failing to appreciate that buildings are more than just roadblocks, people often get stuck in one way of thinking.

As our research shows, these errors follow from the reasonable desire to simplify the world and the ease of sticking with an initial simplification. This suggests that one way to avoid functional fixedness is to always stay open to different ways of simplifying the world and, when faced with a seemingly unsolvable problem, know that taking the mental effort to see things differently can often be worth it.

For Further Reading

Ho, M. K., Cohen, J. D., & Griffiths, T. L. (2023). Rational Simplification and Rigidity in Human Planning. Psychological Science, 34(11), 1281–1292. https://doi.org/10.1177/09567976231200547

Duncker, K. (1945). On problem-solving. Psychological Monographs, 58(5), i–113. https://doi.org/10.1037/h0093599

Ho, M. K., Abel, D., Correa, C. G., et al. (2022). People construct simplified mental representations to plan. Nature, 606, 129–136. https://doi.org/10.1038/s41586-022-04743-9

Mark Ho is an Assistant Professor at Stevens Institute of Technology. His research combines approaches from cognitive science, social psychology, and computer science to study how people and machines solve problems.